AI products rarely fail because the models are wrong—they fail because nobody defined what right even means. Here’s why disciplined evaluation turns demos into dependable systems.

The Demo-to-Production Gap

Every AI demo looks impressive. The model answers questions fluently, generates plausible content, and handles edge cases with apparent ease. Then it goes to production, and everything unravels.

The gap isn’t about model capability. It’s about evaluation rigor. Demo environments are forgiving. Production environments are not.

Defining “Right”

Before you can evaluate an AI system, you need to define success. This sounds obvious but rarely happens with precision:

- What output quality means - Not “good enough” but specific, measurable criteria

- What failure looks like - The mistakes that matter versus acceptable imperfections

- What tradeoffs are acceptable - Speed vs. quality, cost vs. accuracy

Without these definitions, evaluation becomes subjective. “The model seems to work” is not a measurement.

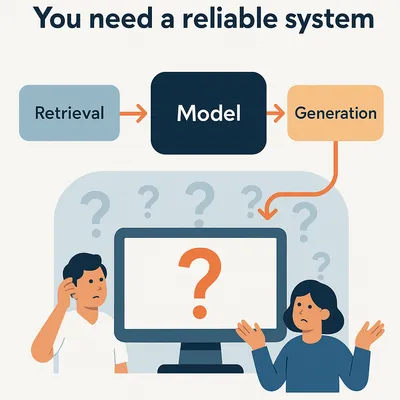

The Evaluation Stack

Good AI requires evaluation at multiple layers:

Component Evaluation

Test individual pieces in isolation:

- Retrieval accuracy

- Embedding quality

- Prompt effectiveness

System Evaluation

Test the integrated pipeline:

- End-to-end response quality

- Latency under realistic load

- Failure recovery behavior

User Evaluation

Test with actual usage patterns:

- Task completion rates

- User satisfaction scores

- Adoption and retention metrics

Building Evaluation Infrastructure

Evaluation infrastructure is unglamorous but essential:

- Golden datasets - Curated examples with known-correct outputs

- Automated scoring - Consistent measurement without manual review bottlenecks

- Regression testing - Catch quality degradation before deployment

- A/B frameworks - Compare changes against production baselines

The Continuous Loop

Evaluation isn’t a gate you pass once. It’s a continuous process:

- Monitor production quality metrics

- Detect when performance drifts

- Investigate root causes

- Improve with targeted changes

- Validate improvements before deployment

This loop turns AI from a fragile demo into a reliable system.

The Hidden Work

The hidden work behind good AI is:

- Writing test cases that cover real failure modes

- Building automated evaluation pipelines

- Maintaining golden datasets as requirements evolve

- Instrumenting systems to measure what matters

- Creating feedback loops from users to developers

None of this work is visible in the final product. But it’s what separates AI that works from AI that sort of works sometimes.

Getting Started

Start with three questions:

- What does success look like? Define specific, measurable criteria.

- How will you measure it? Build automated evaluation for key metrics.

- How will you know when it breaks? Set up monitoring and alerts.

Answer these, and you’ve taken the first step from demo to dependable system.